Detection of Extraneous Visual Signals Does Not Reveal the Syntactic Structure of German Sign Language (DGS)

Abstract

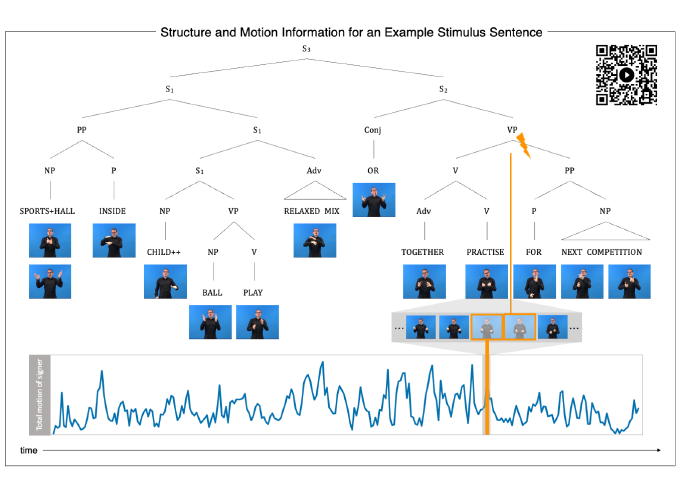

Sentences are not mere strings of words or signs but manifest a complex internal structure. Linguistic research has demonstrated that sign languages and spoken languages both exhibit hierarchical constituent structure, which determines how individual elements in a sentence relate to each other. Here, we report the first adaptation of the psycholinguistic “click” paradigm, which aims to demonstrate the relevance of hierarchical constituent structure during auditory language processing, to the visuo-spatial modality of sign languages. We performed two independent online experiments: the main experiment with a group of 53 deaf signers using German Sign Language (DGS) as their primary means of communication, and a control experiment with a group of 53 hearing non-signers. Both groups were shown videos of syntactically complex sentences in DGS. A white flash (mimicking the “click” in the auditory domain), to which participants had to respond, could occur as an overlay to the video at different levels in the constituent structure. Our pre-registered inferential analyses yielded no effect of our syntactic manipulations in either the group of signers or the group of non-signers. Additional exploratory analyses suggest general effects of attention during the processing of communicative signals, as even the behaviour of the non-signers was influenced by non-manual cues despite their lack of knowledge of DGS. We conclude that the simultaneous and time-shifted presence of different syntax-relevant cues (i.e., hands, mouthings, and non-manuals) makes the sign stream robust against disruption by extraneous visual signals, and we argue that non-signers attend to some non-manual cues because of their resemblance to communicative gestures.