The core of language is modality-independent: Evidence from functional and diffusion MRI with deaf signers

Reproduction of Figure 1 from accepted abstract.



Abstract

An influential school of thought in theoretical linguistics (Adger, 2021; Chomsky, 1995; Chomsky et al., 2023) posits that the core computational machinery for language, namely the ability to hierarchically combine lexical items, is modality-independent. On this view, speech is merely one output channel for representations generated by an internal syntactic system that is independent of externalization (Berwick et al., 2013; Berwick & Chomsky, 2016; Friederici et al., 2017). Against this background, sign languages (for reviews see Emmorey, 2021, 2023; Hickok et al., 1998) constitute a decisive test case: if the combinatorial core is truly modality-independent, deaf native signers should recruit for this process the same cortical network previously observed in hearing users of spoken languages.

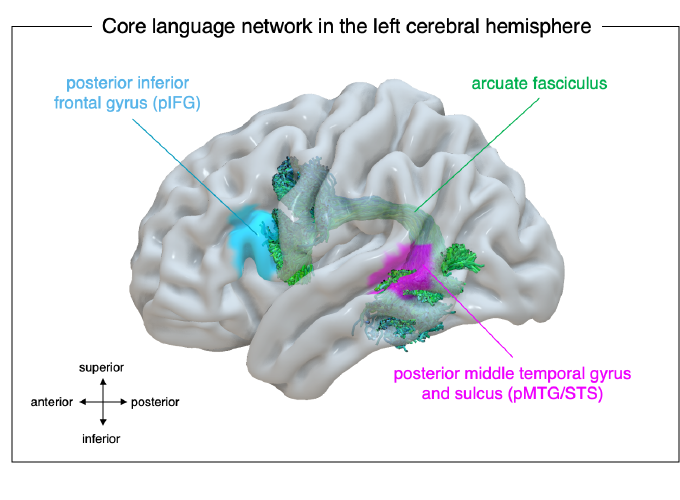

A recent fMRI study by Trettenbrein et al. (2024) set out to test the hypothesized overlap in syntax-relevant brain regions, and in their function, between deaf signers and hearing users of a spoken language. The authors generated meta-analytic association test maps from 169 fMRI studies of spoken and written language to define syntax-selective functional regions of interest in a purely data-driven manner (Figure 1). Deaf native signers of German Sign Language (DGS) and a control group of hearing non-signers were then scanned while viewing signed sentences and lexically matched sign lists, including pseudoword-like stimuli from a sign language unknown to the deaf participants. Only the deaf signers showed condition-sensitive recruitment of these regions of interest: the left posterior inferior frontal gyrus (pIFG) and posterior middle temporal gyrus and superior temporal sulcus (pMTG/STS) were both involved in combinatorial processing in DGS, whereas only the pIFG responded exclusively to DGS and not to the unfamiliar sign language.

Moreover, a recent dMRI study by Trettenbrein et al. (2025) compared deaf native signers with a hearing control group to identify potential structural differences in a set of language-relevant white-matter pathways that might arise from differences in the modality of language use. Replicating earlier work (Cheng et al., 2019; Finkl et al., 2019), the authors observed no macro- or microstructural group differences in the left arcuate fasciculus (Figure 1), indicating that the white-matter backbone of syntax connecting the pIFG to the pMTG/STS (Trettenbrein & Friederici, 2025) is modality-invariant. In contrast, several right-hemispheric fiber tracts differed structurally between the groups, specifically pathways previously linked to visuospatial processing, which is essential for processing sign languages.

In sum, the findings of these two recent studies provide convergent functional and structural evidence that the adult human brain implements a primarily left-hemispheric fronto-temporal syntactic-combinatorial network that does not depend on speech. Modality-specific variation seems to affect only how linguistic structure is externalized, not where it is computed, supporting the view that the computational core of language is architecturally and evolutionarily independent of the different sensory-motor channels that can be used for externalization.