A roadmap for technological innovation in multimodal communication research

Abstract

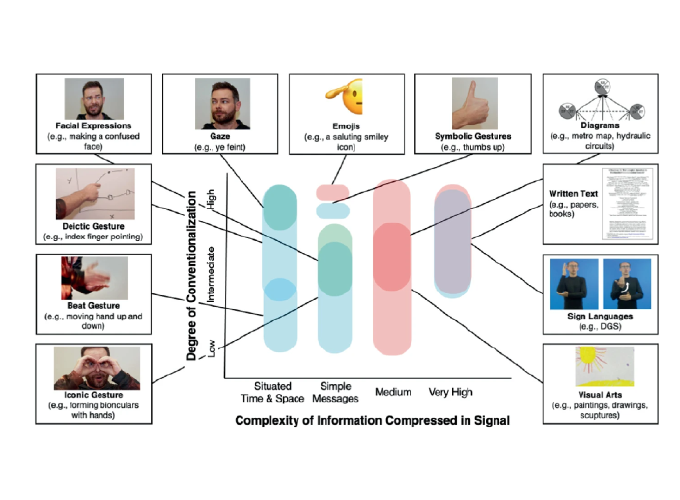

Multimodal communication research focuses on how different means of signalling coordinate to communicate effectively. This line of research is traditionally influenced by fields such as cognitive science and neuroscience, human-computer interaction, and linguistics. With new technologies becoming available in fields such as natural language processing and computer vision, the field can increasingly avail itself of new ways of analyzing and understanding multimodal communication. As a result, there is a general hope that multimodal research may be at the “precipice of greatness” due to technological advances in computer science and the resulting extended empirical coverage. However, for this to come about, there must be sufficient guidance on the key (theoretical) needs for innovation in the field of multimodal communication. Absent such guidance, the research focus of computer scientists might increasingly diverge from crucial issues in multimodal communication. With this paper, we want to further promote interaction between these fields, which may enormously benefit both communities. The multimodal research community (represented here by a consortium of researchers from the Visual Communication [ViCom] Priority Programme) can engage in this innovation by clearly stating which technological tools are needed to make progress in the field of multimodal communication. In this article, we try to facilitate the establishment of a much-needed common ground on feasible expectations (e.g., in terms of terminology and measures needed to train machine learning algorithms) and to critically reflect on possibly idle hopes for technical advances, informed by recent successes and challenges in computer science, social signal processing, and related domains.